Welcome to the final installment of our series on building reliable AI agents. Parts 1 through 5 covered observability and tracing, prompt management, continuous evaluations, experiments and supervised evals, and guardrails. This series distills lessons from our Forward Deployed Engineering team, based on real-world deployments of production agents across industries.

The first five posts focused on building agents that work well: how to instrument, manage, evaluate, and safeguard them. This post covers a different challenge that determines whether your agent actually gets deployed: governance.

Agent security is still not a solved problem. Agents operating with access to internal systems and sensitive data represent serious organizational risk, and shipping one into an enterprise environment means passing compliance and governance reviews. Builders who don't design for this will struggle to get through the door.

Why Enterprise Teams Care About Governance

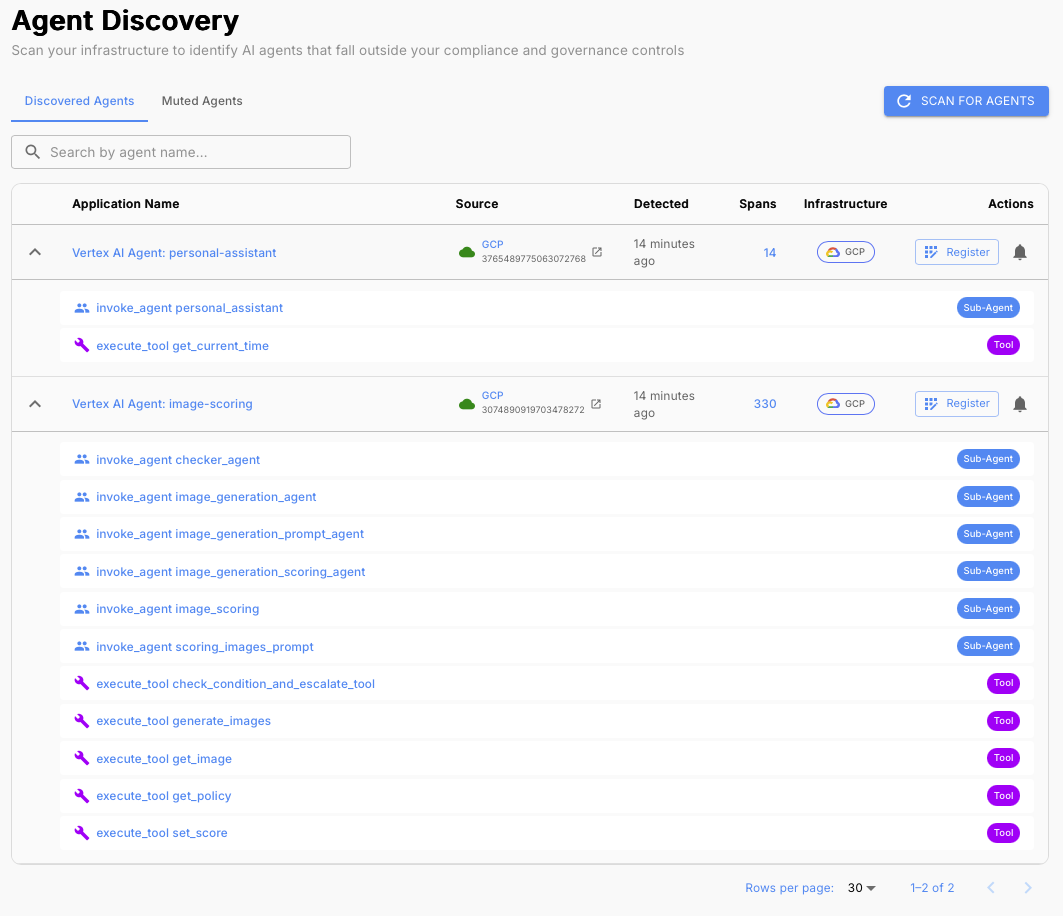

As agent adoption grows, organizations quickly lose track of what agents are running, what data they can access, what tools they can invoke, and who is responsible for them. An unmanaged agent with access to internal systems, customer data, or sensitive APIs represents real organizational risk regardless of how well-built it is.

Enterprise governance teams are responding by requiring that agents meet specific standards before they're allowed to operate in production. If your agent can't demonstrate that it meets those standards, it won't clear review.

Designing for Governance

The good news for builders who followed this series: most of the work is already done. The practices from Parts 1 through 5 are the foundation of a governable agent. Here's how to make sure that work translates into enterprise readiness.

Use frameworks with out-of-the-box telemetry and send traces to centralized, well-known locations. Governance tooling discovers agents by finding their telemetry. An agent that emits no traces is invisible to the organization. Agents that emit traces to standard, centralized destinations can be discovered and inventoried automatically, without requiring manual registration.
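To illustrate why centralized traces make discovery automatic, here is a minimal Python sketch of an inventory built purely from span records. The span dictionaries and the `discover_agents` helper are hypothetical, chosen for illustration. `service.name` follows the OpenTelemetry resource attribute convention, and a real governance tool would read from its own trace store rather than an in-memory list.

```python
from collections import defaultdict


def discover_agents(spans):
    """Group span records by service name to build an agent inventory.

    Each span is assumed to be a dict with a "resource" mapping. Spans
    without a service name cannot be attributed to any agent.
    """
    inventory = defaultdict(lambda: {"span_count": 0, "last_seen": None})
    for span in spans:
        name = span.get("resource", {}).get("service.name")
        if not name:
            continue  # unattributable telemetry never reaches the inventory
        entry = inventory[name]
        entry["span_count"] += 1
        ts = span.get("end_time")
        if ts is not None and (entry["last_seen"] is None or ts > entry["last_seen"]):
            entry["last_seen"] = ts
    return dict(inventory)


# Hypothetical span records, as a centralized trace store might return them.
spans = [
    {"resource": {"service.name": "support-agent"}, "end_time": 100},
    {"resource": {"service.name": "support-agent"}, "end_time": 140},
    {"resource": {"service.name": "billing-agent"}, "end_time": 120},
    {"resource": {}, "end_time": 130},  # no service name: not discoverable
]
inventory = discover_agents(spans)
print(sorted(inventory))
```

Nobody registered either agent by hand. Both appear in the inventory only because their traces landed in the place the tooling looks, while the span with no service name is silently dropped.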

Instrument thoroughly. Governance teams need to understand the full scope of what an agent can do. Make sure traces capture the agent's tools, subagents, LLM providers, and data sources. An agent with incomplete instrumentation will fail compliance reviews because there's no way to assess its risk surface.
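One way to picture this is as a completeness check over span attributes. The sketch below is a simplified assumption, not a real governance tool's logic: the attribute keys (`agent.tool.name`, `agent.subagent.name`, `llm.provider`, `data.source`) and both helper functions are hypothetical names. Map them to whatever conventions your tracing framework actually emits.

```python
REQUIRED_DIMENSIONS = ("tools", "subagents", "llm_providers", "data_sources")

# Hypothetical mapping from span attribute keys to the dimension they describe.
ATTRIBUTE_TO_DIMENSION = {
    "agent.tool.name": "tools",
    "agent.subagent.name": "subagents",
    "llm.provider": "llm_providers",
    "data.source": "data_sources",
}


def risk_surface(spans):
    """Collect the distinct tools, subagents, providers, and data sources seen in traces."""
    surface = {dim: set() for dim in REQUIRED_DIMENSIONS}
    for span in spans:
        for key, value in span.get("attributes", {}).items():
            dim = ATTRIBUTE_TO_DIMENSION.get(key)
            if dim is not None:
                surface[dim].add(value)
    return surface


def uninstrumented(surface):
    """Return the dimensions for which no trace evidence exists."""
    return [dim for dim in REQUIRED_DIMENSIONS if not surface[dim]]


# Hypothetical spans from a single agent.
spans = [
    {"attributes": {"agent.tool.name": "search_tickets", "llm.provider": "example-llm"}},
    {"attributes": {"agent.tool.name": "issue_refund", "data.source": "crm"}},
]
surface = risk_surface(spans)
print(uninstrumented(surface))  # ['subagents']
```

An empty dimension is ambiguous. It may mean the agent really has no subagents, or that subagent calls are not being traced. A reviewer cannot tell the difference from the traces alone, which is why gaps like this tend to prompt follow-up questions.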

Implement continuous evals and guardrails, and be prepared to demonstrate them. Enterprises will ask what safeguards are in place before allowing an agent to operate in their environment. Being able to show active evals and running guardrails is a meaningful signal of production readiness. Builders who can't demonstrate these controls will face longer review cycles and harder questions.

Assign clear ownership. Every agent should have a named owner accountable for its compliance and behavior. Governance tools will surface this, and enterprises will ask for it. An agent without an owner is an agent without accountability, which is a red flag in any compliance review.
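The ownership, evals, and guardrails checks can be pictured as a simple pre-review self-check. This is a minimal sketch under stated assumptions: the `AgentManifest` structure, its field names, and the `review_gaps` helper are hypothetical and do not reflect any particular governance tool's schema.

```python
from dataclasses import dataclass, field


@dataclass
class AgentManifest:
    """Hypothetical record of the controls a reviewer is likely to ask about."""
    name: str
    owner: str = ""
    evals: list = field(default_factory=list)
    guardrails: list = field(default_factory=list)


def review_gaps(manifest):
    """List the controls that are missing from the manifest."""
    gaps = []
    if not manifest.owner.strip():
        gaps.append("no named owner")
    if not manifest.evals:
        gaps.append("no continuous evals")
    if not manifest.guardrails:
        gaps.append("no guardrails")
    return gaps


manifest = AgentManifest(name="support-agent", evals=["answer-relevance"])
gaps = review_gaps(manifest)
print(gaps)  # ['no named owner', 'no guardrails']
```

Running a check like this before submitting an agent for review surfaces the same gaps a governance team would otherwise find for you.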

TLDR

- Shipping an agent into an enterprise environment means passing governance and compliance reviews. Builders who don't design for this will struggle to get through the door.

- Use frameworks with out-of-the-box telemetry and send traces to centralized locations so governance tooling can discover your agent automatically.

- Instrument thoroughly so governance teams can assess your agent's full risk surface: tools, subagents, LLM providers, and data sources.

- Implement continuous evals and guardrails, and be prepared to demonstrate them during review.

- Assign clear ownership so there's accountability for your agent's compliance and behavior.

- The work from Parts 1 through 5 is the foundation. Builders who do that work are already most of the way to enterprise readiness.

Wrapping Up the Series

This post concludes our six-part series on best practices for building reliable agents. The six practices covered (observability, prompt management, continuous evaluations, experimentation, guardrails, and governance) form a complete foundation for building and operating agents that production environments can trust.

If you're just getting started, Part 1 on observability is the right place to begin. Everything else builds from there.

Want to see how we've applied these best practices internally? Check out our agent building stories: "How We Turned a Vibe-Coded Jira Bot Into a Reliable Agent in Two Weeks" and "What 'Building an Agent' Actually Means (And Why Most People Get It Wrong)".