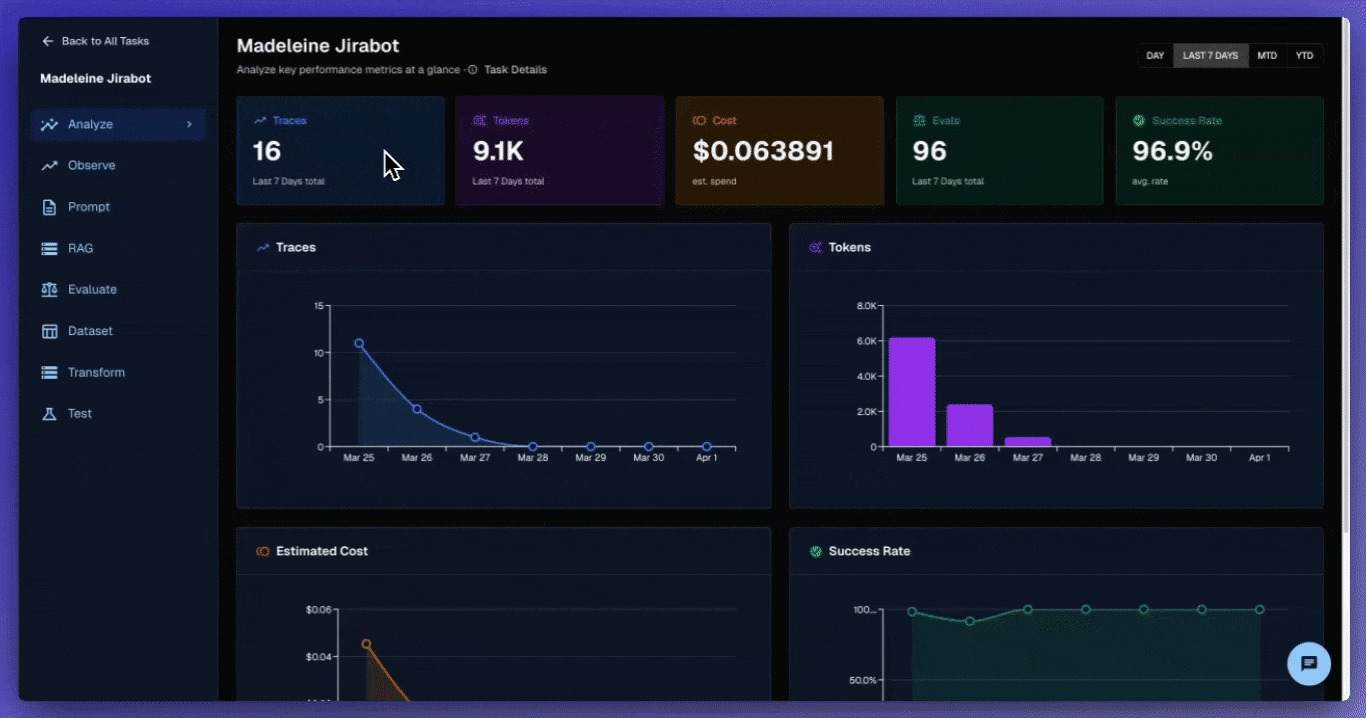

Your team builds agents. Your agents work. But understanding what they're doing? That's where things get messy.

You're clicking through five different screens to trace a single conversation. Your PM is asking why agent costs spiked, but the data lives in three separate dashboards. Your compliance team needs visibility into agent behavior, but they can't navigate the maze of experimental interfaces you've been patching together.

The tools exist. The data exists. But finding what you need when you need it? That's the real problem.

This month's release consolidates Arthur's agent development lifecycle into a unified, enterprise-ready platform. One place to experiment. One place to monitor. One place to govern.

It turns workflow chaos into streamlined experiences and lays the foundation for enterprise-scale governance that actually works.

Enterprise-Grade Policy Management

Compliance teams know the pain: manually tracking model governance across dozens of deployments. No standardized way to apply organization-wide policies. Attestation requirements that live in spreadsheets instead of systems.

Introducing a new Policy Management Framework that transforms compliance from reactive chasing to proactive governance. Create organization-level policies with alert rules and attestation requirements. Apply them systematically across model portfolios. Track compliance state with automatic enforcement delays and grace periods.

- Reusable policy templates. Define governance rules once and apply them across multiple models and workspaces.

- Attestation workflows. Built-in compliance tracking with validity periods and renewal requirements.

- Enforcement controls. Configurable grace periods before policy violations trigger actions.
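To make the interplay between attestation validity and grace periods concrete, here is a minimal Python sketch of how a model's compliance state under one policy could be classified. Everything in it is a hypothetical illustration, not Arthur's API or data model: the `Policy` fields, the three `ComplianceState` values, and the assumption that a never-attested model lapses as soon as the policy is applied.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class ComplianceState(Enum):
    # Hypothetical states for illustration; Arthur's actual states may differ.
    COMPLIANT = "compliant"
    IN_GRACE_PERIOD = "in_grace_period"
    ENFORCED = "enforced"


@dataclass
class Policy:
    # Hypothetical fields, not Arthur's schema.
    name: str
    attestation_validity: timedelta  # how long one attestation stays valid
    grace_period: timedelta          # delay after a lapse before enforcement


def compliance_state(
    policy: Policy,
    assigned_at: datetime,
    last_attested: Optional[datetime],
    now: datetime,
) -> ComplianceState:
    """Classify a model's state under one policy at time `now`."""
    # Assumption: a model that was never attested lapses when the policy is applied.
    if last_attested is None:
        expires_at = assigned_at
    else:
        expires_at = last_attested + policy.attestation_validity
    if now < expires_at:
        return ComplianceState.COMPLIANT
    if now < expires_at + policy.grace_period:
        return ComplianceState.IN_GRACE_PERIOD
    return ComplianceState.ENFORCED


# Usage: a hypothetical 90-day attestation with a 14-day grace period.
policy = Policy("quarterly-bias-review", timedelta(days=90), timedelta(days=14))
start = datetime(2026, 1, 1)
print(compliance_state(policy, start, start, start + timedelta(days=30)).value)   # compliant
print(compliance_state(policy, start, start, start + timedelta(days=95)).value)   # in_grace_period
print(compliance_state(policy, start, start, start + timedelta(days=120)).value)  # enforced
```

Keeping the grace period separate from the validity window is what lets a lapsed attestation be flagged to its owners before any enforcement action fires.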

Compliance teams get systematic oversight. PMs get clear policy requirements. Everyone gets AI governance that scales with your portfolio.

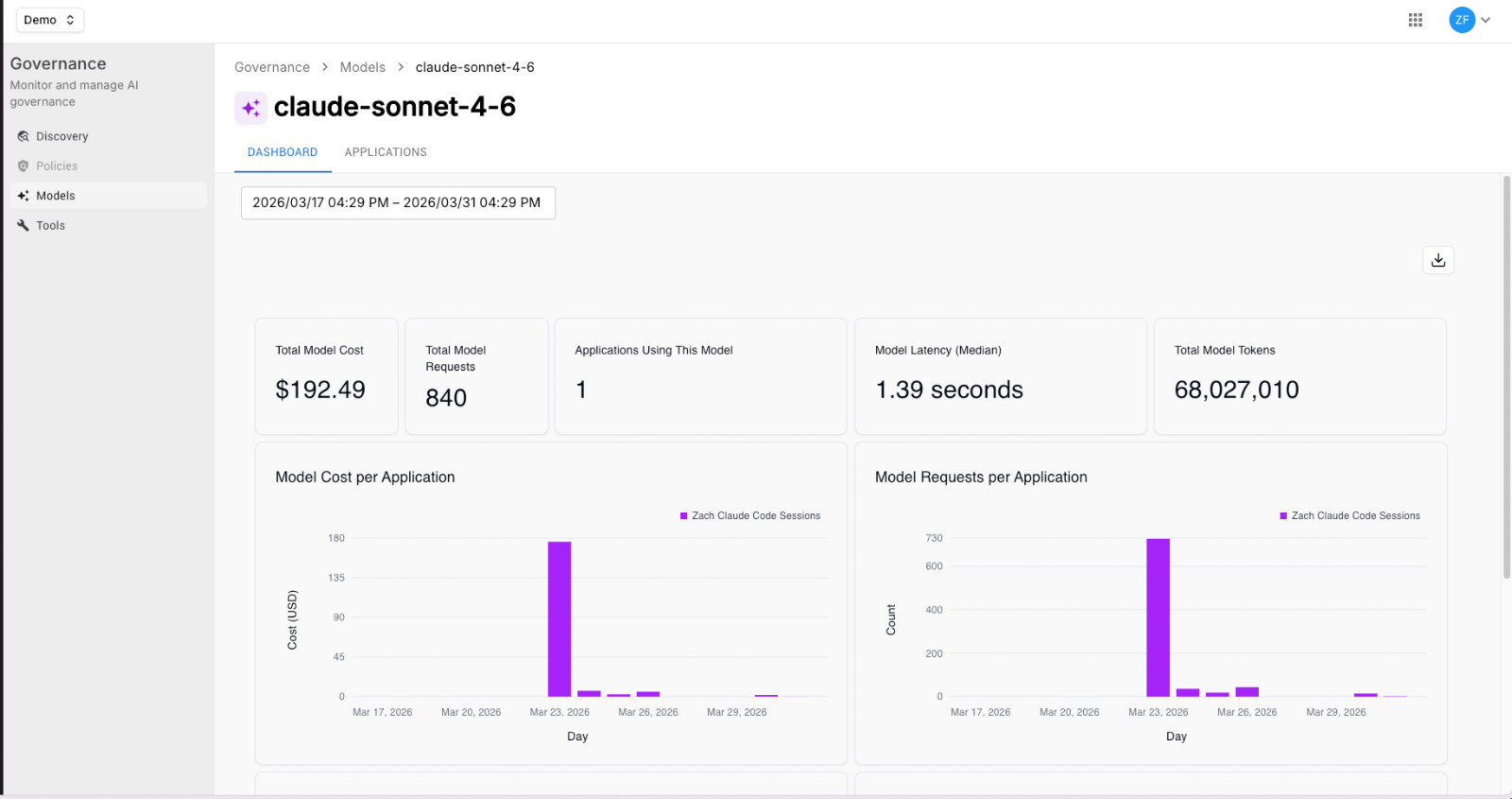

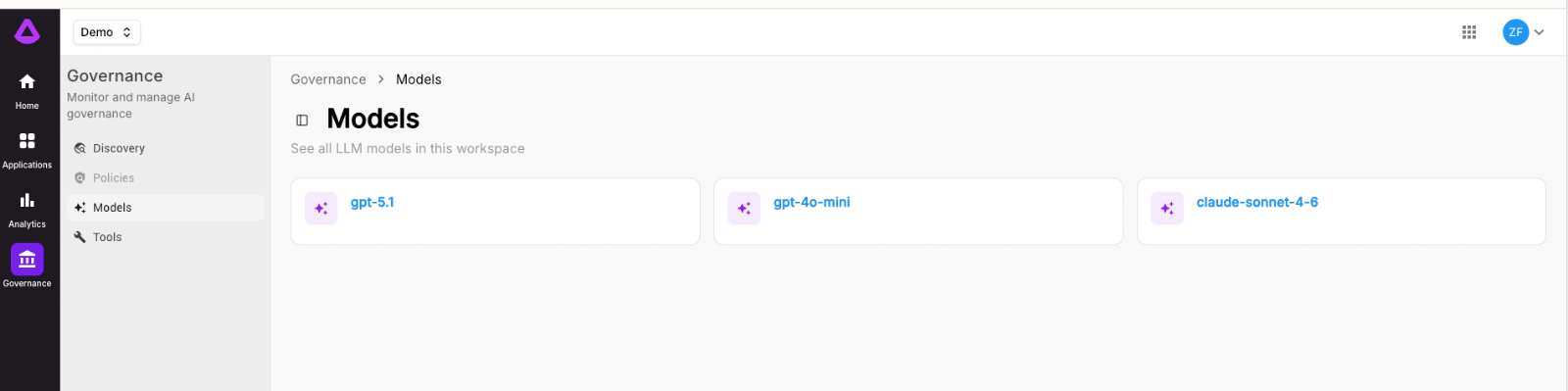

Agent Resource Graph

You've got agents running in production, each wired to different LLM models, tools, sub-agents, and environments. But when someone asks "what LLM models are we actually using across the organization?" the answer is scattered across a dozen config files.

The new Agent Resource Graph gives you a catalog of every LLM model and tool that every agent in your organization is using, all in one place. See the full topology of your agent ecosystem: which models power which agents, which tools are shared across teams, and where your infrastructure dependencies actually live.

- Organization-wide model inventory. A single view of every LLM model in use across all agents, teams, and environments.

- Tool mapping. Understand which tools are being called, how often, and by which agents.

- Dependency visibility. Spot shared dependencies, redundant tool usage, and single points of failure before they become production incidents.
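One way to picture what an organization-wide inventory involves is to fold trace spans into model-to-agent and tool-to-agent indexes. The sketch below is a hypothetical illustration, not how Arthur builds the Agent Resource Graph. It assumes each span is a plain dict with an `agent` field (a hypothetical key) and OpenInference-style attributes, using `llm.model_name` on LLM spans and `tool.name` on tool spans; the agent, model, and tool names are made up.

```python
from collections import Counter, defaultdict


def build_inventory(spans):
    """Index which agents use which models and tools, and count tool calls."""
    models = defaultdict(set)  # model name -> agents using it
    tools = defaultdict(set)   # tool name -> agents calling it
    tool_calls = Counter()     # (agent, tool) -> number of calls
    for span in spans:
        agent = span["agent"]  # hypothetical field identifying the calling agent
        attrs = span.get("attributes", {})
        if "llm.model_name" in attrs:
            models[attrs["llm.model_name"]].add(agent)
        if "tool.name" in attrs:
            tools[attrs["tool.name"]].add(agent)
            tool_calls[(agent, attrs["tool.name"])] += 1
    return models, tools, tool_calls


# Hypothetical spans from two agents that share a model and a tool.
spans = [
    {"agent": "support-bot", "attributes": {"llm.model_name": "model-a"}},
    {"agent": "support-bot", "attributes": {"tool.name": "search_kb"}},
    {"agent": "billing-agent", "attributes": {"llm.model_name": "model-a"}},
    {"agent": "billing-agent", "attributes": {"tool.name": "search_kb"}},
    {"agent": "billing-agent", "attributes": {"tool.name": "search_kb"}},
]
models, tools, tool_calls = build_inventory(spans)

# Tools used by more than one agent are candidates for shared dependencies.
shared = sorted(name for name, agents in tools.items() if len(agents) > 1)
print(shared)  # ['search_kb']
```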

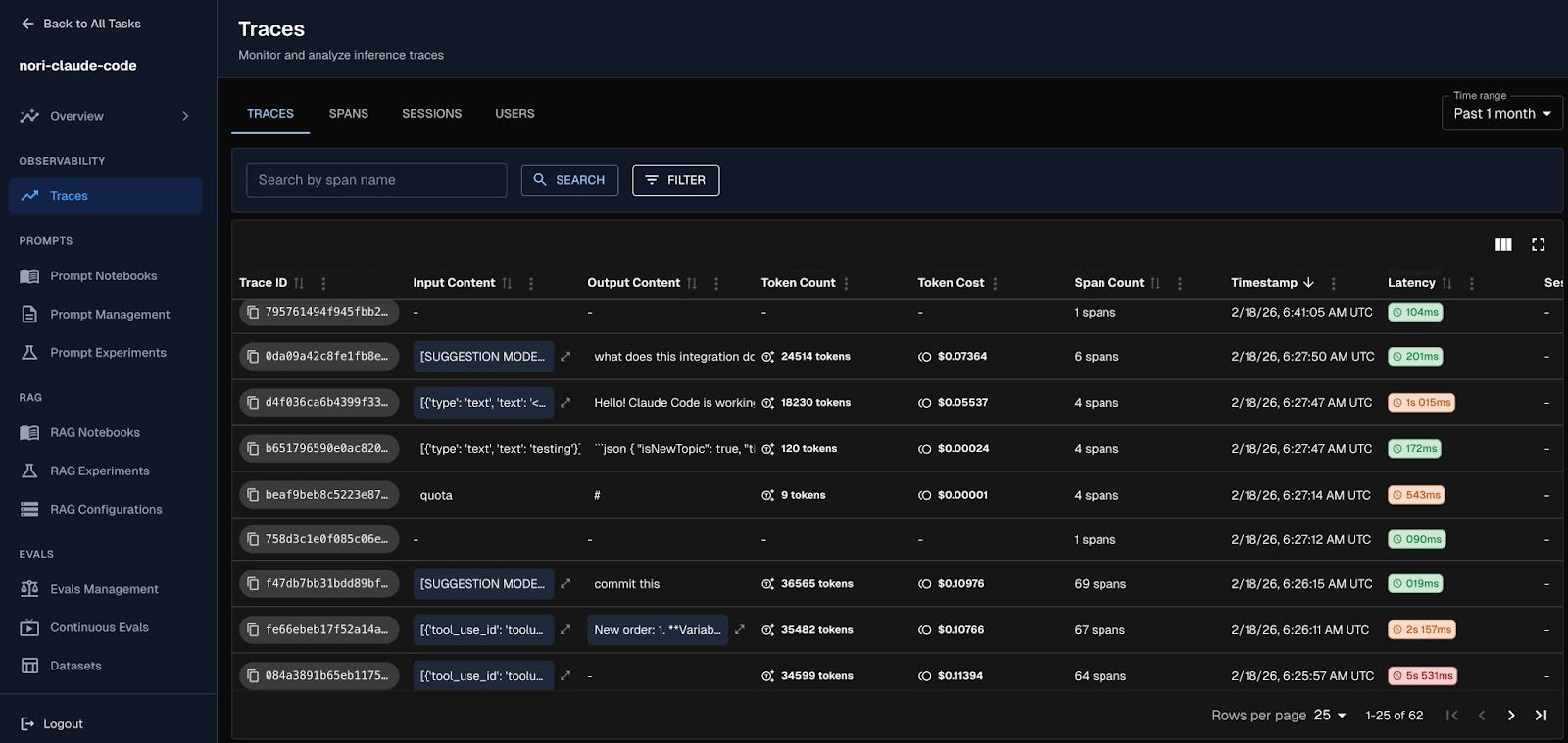

Built-in Claude Code Integration

Your developers are already using Claude Code for agentic coding workflows. But every prompt, tool call, and LLM interaction inside those sessions? A black box. You can't see what Claude Code is actually doing, how many tokens it's burning, or where failures happen.

Arthur Engine now ships with a built-in Claude Code integration that traces every Claude Code session as OpenInference spans. Every user prompt becomes a trace containing the tool calls Claude made and the LLM API calls it used to respond, giving you full observability into agentic coding workflows.

- Full session tracing: Every user prompt creates a trace with tool calls, LLM spans, retriever operations, and sub-agent invocations, including failures.

- Quick setup: Install globally to trace all Claude Code sessions, or scope it to a specific project. A single install.sh and you're up.

- GitHub Actions ready: Drop in the included workflow files for automated PR review and interactive Claude on issues, with traces sent straight to Arthur Engine.

- Zero-impact when unconfigured: If credentials aren't set, the tracer silently does nothing, so it's safe to install in shared projects and CI pipelines.
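The two core behaviors described above, one trace per user prompt and a tracer that silently does nothing when credentials are missing, can be sketched in a few lines of Python. This is a hypothetical illustration, not the integration's actual code: the environment variable names `ARTHUR_BASE_URL` and `ARTHUR_API_KEY`, the `Span` and `PromptTracer` classes, the span names, and the in-memory `exported` list (a stand-in for sending spans to Arthur Engine) are all assumptions.

```python
import os
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Span:
    name: str
    kind: str  # OpenInference-style span kind, e.g. "AGENT", "TOOL", "LLM"
    status: str = "OK"
    children: List["Span"] = field(default_factory=list)


class PromptTracer:
    """Hypothetical tracer: one root span (a trace) per user prompt."""

    def __init__(self, env=None):
        env = os.environ if env is None else env
        # Hypothetical variable names; if either is missing, tracing is disabled.
        self.enabled = bool(env.get("ARTHUR_BASE_URL") and env.get("ARTHUR_API_KEY"))
        self.exported: List[Span] = []

    def trace_prompt(self, prompt: str, steps) -> Optional[Span]:
        if not self.enabled:
            return None  # zero impact: nothing is recorded or sent
        root = Span(name=prompt, kind="AGENT")
        for name, kind, ok in steps:
            # Failed steps are kept as spans with an error status, not dropped.
            root.children.append(Span(name, kind, "OK" if ok else "ERROR"))
        self.exported.append(root)  # stand-in for exporting to Arthur Engine
        return root


# Usage with made-up steps: two tool calls (one failing) and one LLM call.
steps = [("Read", "TOOL", True), ("llm-call", "LLM", True), ("Bash", "TOOL", False)]
configured = PromptTracer(env={"ARTHUR_BASE_URL": "https://arthur.example.com", "ARTHUR_API_KEY": "key"})
trace = configured.trace_prompt("Fix the failing test", steps)
print([child.status for child in trace.children])  # ['OK', 'OK', 'ERROR']

unconfigured = PromptTracer(env={})
print(unconfigured.trace_prompt("Fix the failing test", steps))  # None
```

Checking credentials once at construction keeps the disabled path to a single early return, which is what makes a tracer like this safe to leave installed in shared projects.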

Learn more about the integration here.

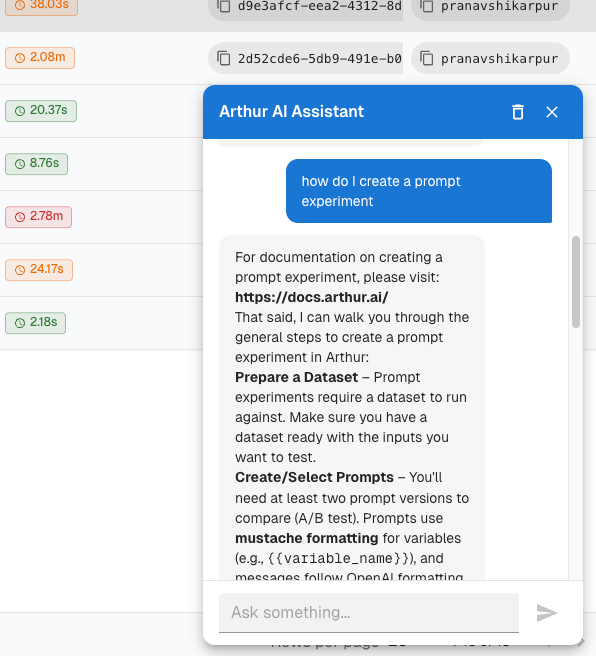

Engine Assistant

We built the Arthur Engine with ease of use in mind, but we recognize that learning how to set up agent experiments, continuous evals, and more can be tedious. So we built an assistant into the engine itself.

The new Engine Assistant is a built-in chatbot that lives right inside Arthur Engine, ready to help you seamlessly set up tracing, evaluations, experiments, and more through natural conversation. Instead of hunting through documentation, just ask.

- Ask anything, or ask it to do anything: Get instant answers about Arthur Engine features, APIs, and best practices, or tell it to create new prompts, experiments, and evaluations.

- Automated setup through conversation: Instead of clicking through configuration screens, describe what you want in natural language. The assistant creates and configures resources in the platform on your behalf using the engine’s APIs.

- Context-aware guidance: The assistant understands where you are in the product, so it can answer questions about what you're looking at or take action right where you are without you ever leaving your workflow.

Evaluation That Works In Your Workflow

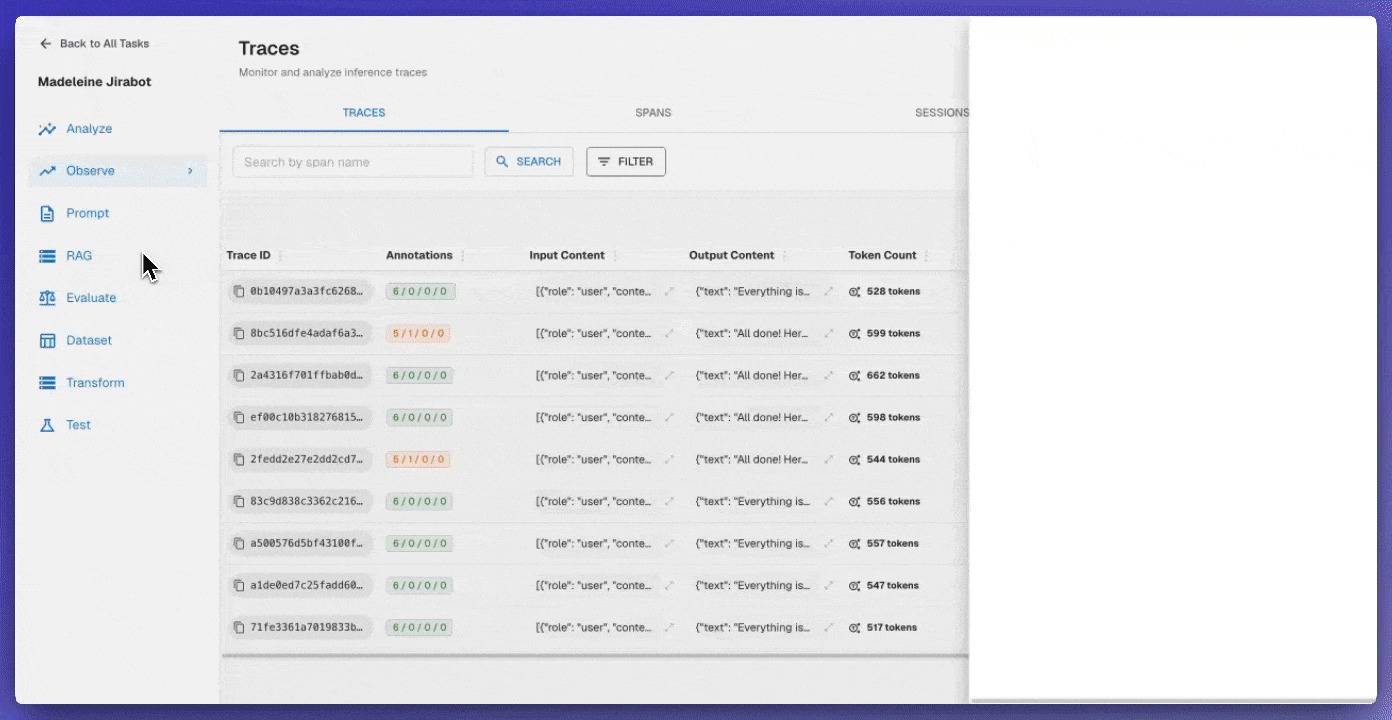

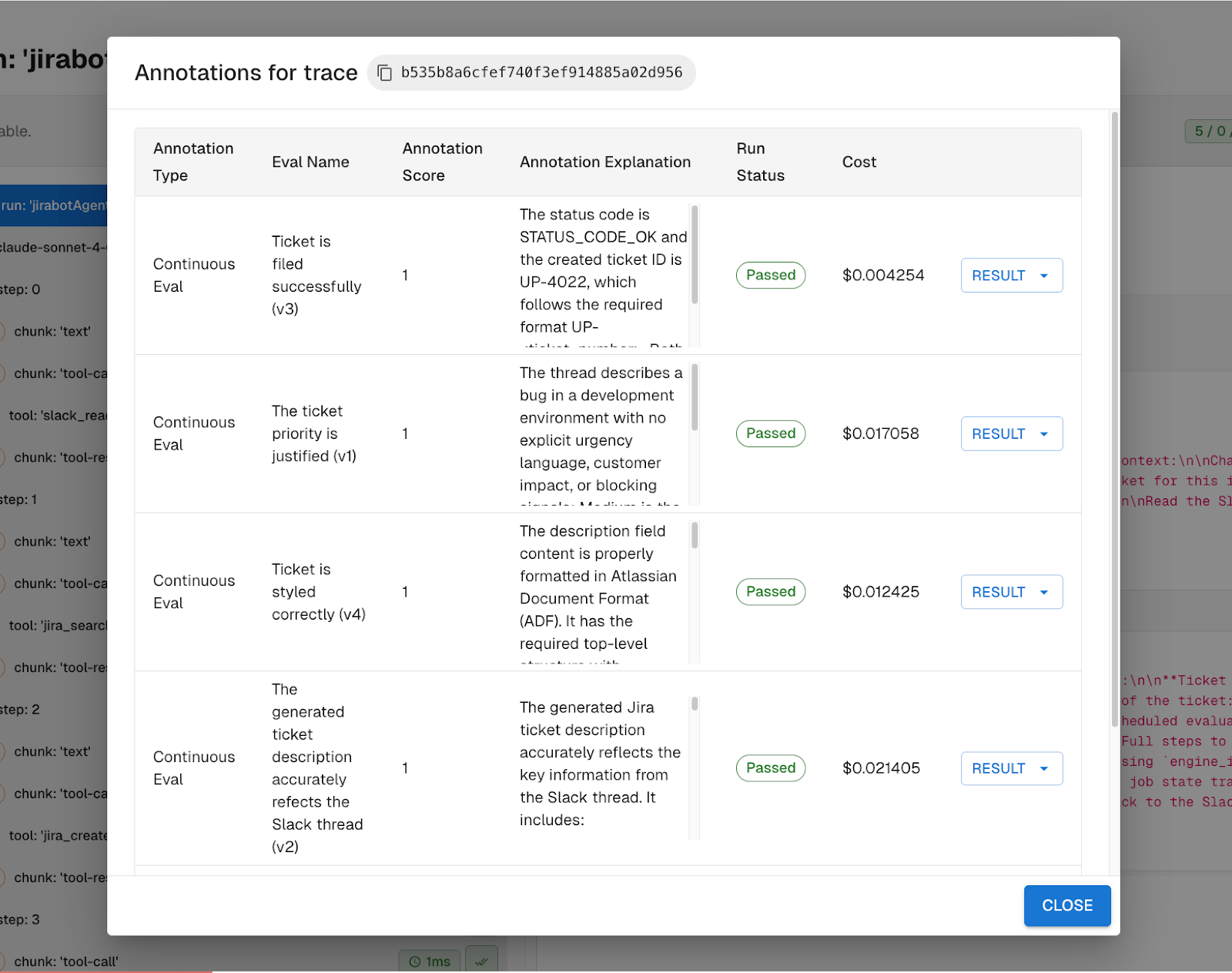

Running evaluations shouldn't require a PhD in Arthur's interface design. Creating continuous evals from traces shouldn't mean copying and pasting cryptic span selectors. Understanding why an evaluation failed shouldn't require a separate debugging session.

Visual Span Selection puts evaluation creation where debugging happens:

- Point-and-click eval setup. Select data directly from the trace viewer instead of writing span selectors by hand.

- Inline continuous evals. Create evaluations side-by-side with span inspection, then submit without losing your analysis context.

- Clickable trace IDs. Jump from experiment results to full trace context with one click.

Your developers spend less time configuring and more time understanding. Your evaluation coverage improves because creating evals becomes part of debugging, not a separate chore.

Agent Task Management That Scales With Your Portfolio

You have agents in production. More launching next week. Some experimental, some business-critical. Right now, finding the failing one means scrolling through an unsorted list and guessing which traces matter. It’s like searching your email without folders.

Enhanced task management brings operational clarity to your agent portfolio:

- Smart filtering and archival. Hide deprecated experiments without losing their data, and surface active agents with performance sorting. Find tasks by activity window, status, or any combination of criteria.

- Rich agent metadata. See tools, sub-agents, models, and infrastructure at a glance without clicking through configuration screens.

- Task ownership mapping. Automatic service name detection connects running agents to responsible teams.

For PMs: you get real visibility into which agents are performing and which teams own them. For developers: you find the failing agent in seconds, not minutes. For governance: you have an inventory of production agents with clear ownership trails.

Unified Navigation That Actually Makes Sense

Product teams waste hours navigating between scattered interfaces. Your developers lose context switching between trace viewers, experiment runners, and evaluation dashboards. Your stakeholders can't find the insights they need when they need them.

A new consolidated interface transforms how you work with agents:

- Single-entry navigation. RAG, Prompts, and Evaluations each get unified tabbed interfaces instead of scattered menu items.

- Contextual workflows. Create evaluations directly from the trace viewer without losing your debugging context.

- Consistent theming. Dark mode that actually works, with proper contrast and unified styling across every component.

For PMs: your team stops losing time in interface archaeology. For developers: context switching becomes deliberate, not accidental. For compliance: one place to audit agent behavior across all experiments and deployments.

From scattered debugging to unified insights and workflows. From reactive compliance to proactive AI governance. This release moves you from tool chaos to systematic control.

From experimental interfaces to enterprise workflows. From agent complexity to operational clarity.

Arthur's agent development lifecycle now works the way your team thinks: experiment, deploy, monitor, govern. All in one place. All with the reliability your production systems demand.

PS: Send any feedback directly to ashley@arthur.ai. See the full platform release notes for March 2026 here.