Model monitoring is key to keeping artificial intelligence models performing well over time. AI models make predictions based on historical data. However, as the world changes, that historical data becomes less and less relevant, which can lead to incorrect predictions and poor business outcomes. It’s important to understand the ways in which models fail, so you can spot a failure the moment it happens and update your models to reflect the new conditions in production. To show how model monitoring can be put to use, we’ve outlined three of the top ways data issues can cause AI performance loss below.

1. A Changing World

With a few exceptions, machine learning models do not learn on their own. Businesses rely on humans and automated systems to deploy updates. As the world naturally changes and evolves, a model that isn’t updated falls more and more out of tune with reality. This is data drift, and it can happen in a few different ways.

First, a model may be inadvertently used in ways that data scientists did not intend. For instance, suppose your business sells skincare products targeted towards a more mature clientele, and you use AI to manage your ad spend. Your training data is likely built from demographic information about your original target age group. However, if you launch a new product targeted towards teens and begin using those same models without updating the training data, your advertising will likely underperform.

Second, the world isn’t static: it evolves constantly. This means past data points aren’t always good indicators for future predictions. For example, let’s say you run an app with a built-in messaging feature. You may implement a model to predict and suppress spam in order to improve the user experience. However, over time, spammers will likely figure out what your model looks for and change their behavior to subvert it.
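One way to make the skincare example concrete is to compare the distribution of a feature in training data against what the model sees in production. The sketch below uses the Population Stability Index (PSI), a common drift score; it is only one of several possible checks, and the function name, the age values, and the 0.25 alert threshold (a widely quoted rule of thumb, not a hard rule) are all assumptions made for illustration.

```python
import math


def population_stability_index(reference, production, n_bins=10):
    """PSI between two numeric samples, using equal-width bins over the reference range."""
    lo, hi = min(reference), max(reference)
    # Fall back to a width of 1.0 if every reference value is identical.
    width = (hi - lo) / n_bins or 1.0

    def bin_fractions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the reference range are clamped into the first or last bin.
            counts[min(max(idx, 0), n_bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    ref = bin_fractions(reference)
    prod = bin_fractions(production)
    return sum((p - r) * math.log(p / r) for r, p in zip(ref, prod))


# Hypothetical customer ages: the original mature audience vs. a new teen audience.
training_ages = list(range(40, 70))
teen_ages = [13, 14, 15, 16, 17, 18, 19] * 4

psi = population_stability_index(training_ages, teen_ages)
if psi > 0.25:  # assumed alert threshold
    print(f"Possible data drift in 'age' (PSI = {psi:.2f})")
```

A score near zero means production data still looks like the training data; a large score is a prompt to investigate and possibly retrain, not proof that the model is wrong.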

2. Extreme Novel Events

Novel events, good and bad, happen all the time; we’re living through one right now with COVID-19. These events can have a large impact on businesses. A lighter example is sudden and unexpected popularity.

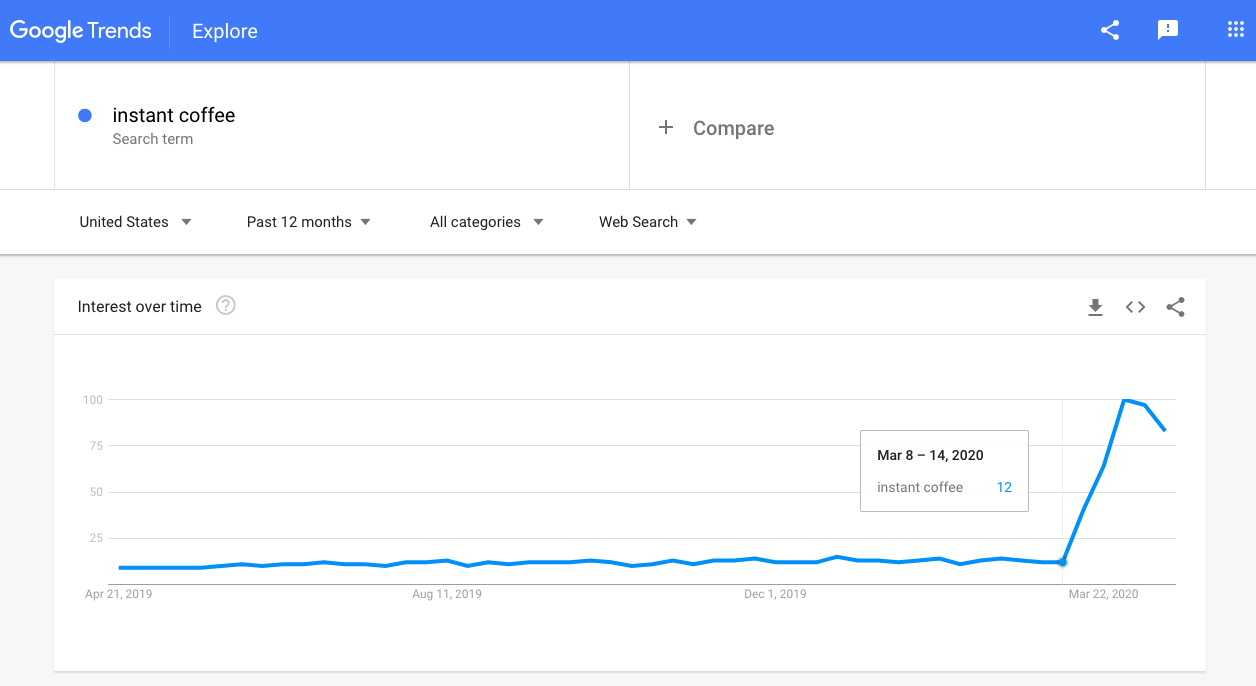

Take, for example, dalgona coffee. Thanks to this viral TikTok coffee trend, searches for instant coffee on Google grew by more than 700% in about a week.

Retailers, online and offline, often have models that use historical data to predict how much stock they should carry of any given product, but that data doesn’t account for sudden trends. The result was lots of customers frustrated at the lack of stock available for purchase, and lots of missed revenue for the businesses. Model monitoring, here, could have given these retailers advance notice of the incoming surge in purchases.
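A simple way to picture that kind of advance notice is a check that compares the latest value of a signal, such as search interest for a product, against its recent history. The sketch below flags a value that sits far above the historical mean, measured in standard deviations. The function name, the threshold of 3, and the weekly numbers are hypothetical and chosen only to mirror a jump of roughly 700%.

```python
from statistics import mean, stdev


def is_spike(history, latest, threshold=3.0):
    """Return True if `latest` is more than `threshold` standard deviations above the mean of `history`."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat history: any increase at all is treated as a spike.
        return latest > mu
    return (latest - mu) / sigma > threshold


# Hypothetical weekly search-interest index for "instant coffee".
weekly_interest = [100, 104, 98, 101, 97, 103]

print(is_spike(weekly_interest, 102))  # an ordinary week
print(is_spike(weekly_interest, 800))  # a viral week
```

A real system would likely use a rolling window and account for seasonality, but even a check this small would surface a surge of that size within one reporting period.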

3. Data Ecosystem Changes

Usually, you don’t just have one model feeding into one outcome. Instead, models feed into more models to make much more complex predictions and decisions. This means that changing even something seemingly innocuous, like going from nulls to 0s in one model’s output, can have a big impact on the performance of your whole system. In many cases, changes may not be immediately catastrophic. However, these quieter inaccuracies are often what keep data scientists up at night because they’re much harder to spot and can cause huge monetary losses.

Here, model monitoring is vital for catching subtle (yet powerful) changes that would otherwise be overlooked. Small changes and inconsistencies are easy to miss, but a model monitoring system protects you from data changes that lead to model failures and lost revenue.
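The nulls-to-0s change described above is the kind of thing a very small check can catch. The sketch below compares how often a field is missing and how often it is exactly zero in a reference batch versus a current batch, and reports which rates moved. The function names, the 5% tolerance, and the sample scores are assumptions for illustration, not part of any particular monitoring product.

```python
def value_rates(values):
    """Return the fraction of entries that are None and the fraction that are exactly 0."""
    n = len(values)
    return {
        "null": sum(v is None for v in values) / n,
        "zero": sum(v is not None and v == 0 for v in values) / n,
    }


def changed_rates(reference, current, tolerance=0.05):
    """Return the names of the rates that moved by more than `tolerance` between batches."""
    ref, cur = value_rates(reference), value_rates(current)
    return [name for name in ref if abs(cur[name] - ref[name]) > tolerance]


# Hypothetical upstream model scores before and after missing values became 0s.
last_week = [0.4, None, 0.7, None, 0.1, 0.9, None, 0.3, 0.5, 0.2]
this_week = [0.4, 0, 0.7, 0, 0.1, 0.9, 0, 0.3, 0.5, 0.2]

print(changed_rates(last_week, this_week))
```

Here the null rate drops from 30% to 0% while the zero rate rises by the same amount, so both rates are reported. Nothing has crashed, which is exactly why a downstream model could quietly degrade without a check like this.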

Today, AI is vital for growing and scaling businesses. But we must be cognisant that AI is still quite brittle. Understanding the ways in which AI models can lose accuracy enables your business to monitor and address those issues before they cause real problems for your team. These guardrails help ensure that you deploy a safe and reliable AI system within your business and get the most out of the models you’ve spent so much effort to deploy into production.

Have questions about model monitoring? Shoot us an email at info@arthur.ai

FAQ:

Why is AI Performance Monitoring essential for businesses?

AI Performance Monitoring is crucial for businesses because it helps maintain the accuracy and reliability of AI models. By detecting and addressing performance issues early, businesses can avoid incorrect predictions, reduce risks, and ensure optimal decision-making, leading to better business outcomes.

How does AI Performance Monitoring help with data drift?

AI Performance Monitoring helps with data drift by continuously analyzing the data that the AI model processes and comparing it to the historical data used during training. When significant deviations are detected, the monitoring system can alert data scientists to update the model, ensuring it remains relevant and accurate.

What tools are commonly used for AI Performance Monitoring?

Common tools for AI Performance Monitoring include software platforms like Arthur.ai, which provide comprehensive monitoring solutions. These tools offer features such as anomaly detection, performance analytics, and alerts for data drift and model degradation, enabling businesses to maintain high-performing AI systems.

How can AI Performance Monitoring improve model reliability during extreme events?

AI Performance Monitoring can improve model reliability during extreme events by quickly detecting sudden changes in data patterns, such as those caused by novel or unexpected events. By identifying these changes early, businesses can adjust their models to better handle the new conditions, ensuring continued accuracy and performance.

What are the benefits of implementing AI Performance Monitoring in a data ecosystem?

Implementing AI Performance Monitoring in a data ecosystem provides protection against subtle data changes that can lead to model failures and lost revenue. It helps in catching inconsistencies and ensuring that the interconnected models within the system continue to function accurately, maintaining overall system performance.